Stone 1974 is referenced in:

Michaelsen J. 1987. Cross-validation in statistical climate forecast models. J Climate Applied Meteorology, 26:1589-1600

1520-0450(1987)026-1589-cviscf-2.0.co;2.pdf

The set $Z$ consists of predictors and targets:

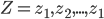

A prediction rule $\eta(x_0, Z)$ will be used to predict $y_0$ from $x_0$.

Let $Q$ be the accuracy.

With least squares, this will usually be

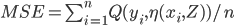

In other words, the expected error Err is

![Err= E[Q(y_{i},\eta(x_{0},Z))]](http://lakm.us/thesit/wp-content/uploads/eq_a3a3253210769351b42ecc2c29b07f59.png)

MSE

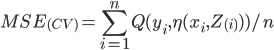
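As a rough illustration of the notation above, the following sketch computes a mean squared error for a prediction rule fitted on Z. It rests on several assumptions that are not in the notes: Q is taken to be squared error, the rule η is a straight-line least-squares fit, the helper names (`fit_line`, `eta`, `Q`, `apparent_mse`, `loo_cv_mse`) are hypothetical, and the data are made up. The leave-one-out loop is only one common form of cross validation; the notes do not say which variant is meant.

```python
# Minimal sketch in pure Python. Squared-error Q and a straight-line
# least-squares rule are assumptions, not taken from the original notes.

def fit_line(Z):
    """Least-squares fit of y = a + b*x to Z = [(x_i, y_i), ...]."""
    n = len(Z)
    mean_x = sum(x for x, _ in Z) / n
    mean_y = sum(y for _, y in Z) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in Z)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in Z)
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b

def eta(x0, Z):
    """Prediction rule eta(x0, Z): fit on Z, then predict at x0."""
    a, b = fit_line(Z)
    return a + b * x0

def Q(y, prediction):
    """Accuracy measure, assumed here to be squared error."""
    return (y - prediction) ** 2

def apparent_mse(Z):
    """Mean of Q over the same points that were used to fit the rule."""
    return sum(Q(y, eta(x, Z)) for x, y in Z) / len(Z)

def loo_cv_mse(Z):
    """Leave-one-out estimate: predict each point from a fit without it."""
    total = 0.0
    for i, (x_i, y_i) in enumerate(Z):
        Z_minus_i = Z[:i] + Z[i + 1:]
        total += Q(y_i, eta(x_i, Z_minus_i))
    return total / len(Z)

# Made-up sample, for illustration only.
Z = [(0.0, 0.1), (1.0, 0.9), (2.0, 2.2), (3.0, 2.8), (4.0, 4.1)]
print("apparent MSE:", apparent_mse(Z))
print("leave-one-out MSE:", loo_cv_mse(Z))
```

For a least-squares fit, the leave-one-out value is never smaller than the apparent value, because each held-out point is predicted by a rule that has not seen it.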

In cross validation